How does lens selection in a vision system affect overall detection performance?

In machine vision systems, the lens is often regarded as the camera’s “eye.” However, when designing systems, many engineers tend to focus too much on the camera’s pixel count and sensor model, while overlooking the importance of lens selection. In fact, the choice of lens directly determines how optical signals reach the sensor, thereby significantly affecting the system’s resolution, image quality, and detection accuracy.

I. The Choice of Lens Determines the System's Actual Resolution

1. Lens Resolution and Camera Compatibility

Pairing a high-resolution camera with a low-resolution lens is like using a magnifying glass to view blurry handwriting: the final image will still be blurry. The lens’s optical resolution (typically expressed in line pairs per millimeter, lp/mm) must be at least equal to the sensor’s Nyquist frequency, which is 1 / (2 × pixel pitch); otherwise, the lens will act as a bottleneck, limiting the system’s overall resolution.
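The matching rule above can be checked with a few lines of arithmetic. The following is a minimal sketch; the function names and the example values (3.45 µm pixels, a lens rated at 120 lp/mm) are illustrative assumptions, not figures from a specific camera or lens.

```python
def nyquist_lp_per_mm(pixel_size_um: float) -> float:
    """Sensor Nyquist frequency in line pairs per millimeter.

    One line pair needs at least two pixels, so the limit is
    1 / (2 * pixel pitch), with the pitch expressed in millimeters.
    """
    pixel_size_mm = pixel_size_um / 1000.0
    return 1.0 / (2.0 * pixel_size_mm)


def lens_is_bottleneck(lens_lp_per_mm: float, pixel_size_um: float) -> bool:
    """True when the lens resolves less than the sensor can sample."""
    return lens_lp_per_mm < nyquist_lp_per_mm(pixel_size_um)


if __name__ == "__main__":
    pixel_um = 3.45   # assumed example pixel pitch
    lens_lp = 120.0   # assumed example lens rating
    print(f"Sensor Nyquist frequency: {nyquist_lp_per_mm(pixel_um):.1f} lp/mm")
    print("Lens limits the system:", lens_is_bottleneck(lens_lp, pixel_um))
```

Lens ratings are usually quoted at a given contrast level and image position, so a comparison like this is a first screening step rather than a full MTF evaluation.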

2. The Impact of Sensor Size and Focal Length on Field of View

The lens’s image circle must be larger than or equal to the camera’s sensor size (its diagonal); otherwise, vignetting or a sudden drop in edge resolution will occur. At the same time, the choice of focal length determines the field of view at a given working distance: a short focal length provides a wide field of view, while a long focal length provides a narrower field of view with more pixels on each feature. Choosing the wrong focal length can result in the target object occupying too small a portion of the frame (insufficient detail) or too large a portion (failing to capture all features).
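As a rough illustration of these two checks, the sketch below compares the sensor diagonal with the lens image circle and estimates a focal length from the required field of view. It assumes a thin-lens model with the working distance measured from the lens to the object; real lenses have thickness, so treat the result as a starting point. All names and numbers are hypothetical.

```python
import math


def sensor_diagonal_mm(width_mm: float, height_mm: float) -> float:
    """Diagonal of the sensor's active area."""
    return math.hypot(width_mm, height_mm)


def covers_sensor(image_circle_mm: float, width_mm: float, height_mm: float) -> bool:
    """True when the lens image circle is at least as large as the sensor diagonal."""
    return image_circle_mm >= sensor_diagonal_mm(width_mm, height_mm)


def focal_length_mm(sensor_side_mm: float, fov_side_mm: float,
                    working_distance_mm: float) -> float:
    """Thin-lens estimate of the focal length for a required field of view.

    Magnification m = sensor side / field-of-view side, and
    f = WD * m / (1 + m) follows from 1/f = 1/WD + 1/(m * WD).
    """
    m = sensor_side_mm / fov_side_mm
    return working_distance_mm * m / (1.0 + m)


if __name__ == "__main__":
    # Assumed example: 8.8 mm x 6.6 mm sensor, 100 mm wide field of view, 300 mm working distance.
    print("Covered by a 16 mm image circle:", covers_sensor(16.0, 8.8, 6.6))
    print(f"Estimated focal length: {focal_length_mm(8.8, 100.0, 300.0):.1f} mm")
```

In practice the nearest standard focal length is chosen and the working distance is adjusted to match.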

II. Lens Specifications Directly Affect Image Quality

1. Distortion and Measurement Reliability

Wide-angle lenses or low-quality lenses often exhibit significant optical distortion (barrel or pincushion). In vision tasks requiring precise measurement of dimensions, positions, or angles, distortion introduces systematic errors that grow toward the image edges. Algorithmic correction can remove most of the geometric error, but it resamples the image, so some information near the edges is still lost.
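To get a feel for the size of this systematic error, the sketch below converts a datasheet-style distortion percentage into a pixel displacement. It assumes a single-coefficient radial model (shift proportional to the cube of the radius), which is a common simplification and not necessarily how a given lens behaves; the function name and values are hypothetical.

```python
import math


def radial_shift_px(r_px: float, r_max_px: float, distortion_pct_at_r_max: float) -> float:
    """Radial displacement of a point at radius r_px from the image center.

    Assumes r_distorted = r * (1 + k1 * r^2), with k1 chosen so that the
    relative distortion at r_max_px equals the given percentage.
    Negative percentages describe barrel distortion, positive pincushion.
    """
    k1 = (distortion_pct_at_r_max / 100.0) / (r_max_px ** 2)
    return k1 * r_px ** 3


if __name__ == "__main__":
    # Assumed example: 2448 x 2048 pixel sensor, -1 % distortion at the corner.
    r_corner = math.hypot(2448 / 2, 2048 / 2)
    for fraction in (0.25, 0.5, 1.0):
        shift = radial_shift_px(fraction * r_corner, r_corner, -1.0)
        print(f"r = {fraction:.2f} * r_max -> shift of {shift:.2f} px")
```

Even a distortion figure that looks small in percent can amount to many pixels near the corners, which is why measurement applications either use low-distortion lenses or calibrate and correct.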

2. Contrast and Signal-to-Noise Ratio

A lens’s coating process, aperture size, and glass material affect light transmission and stray light suppression. An excessively large aperture increases aberrations, including chromatic aberration, and reduces edge contrast; an excessively small aperture causes diffraction blur. A suitable lens ensures clear, high-contrast edges at the local features of the object being measured, which is the foundation for stable image segmentation and feature extraction.
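The diffraction side of this trade-off can be estimated from the Airy disk diameter, 2.44 × wavelength × f-number. The sketch below compares that diameter with the pixel pitch. It uses the nominal f-number and green light (0.55 µm) as assumptions; at high magnification the effective f-number is larger, so the real blur would be greater.

```python
def airy_disk_diameter_um(f_number: float, wavelength_um: float = 0.55) -> float:
    """Diameter of the Airy disk (to the first dark ring) in micrometers."""
    return 2.44 * wavelength_um * f_number


def airy_to_pixel_ratio(f_number: float, pixel_size_um: float,
                        wavelength_um: float = 0.55) -> float:
    """How many pixels the diffraction spot spans."""
    return airy_disk_diameter_um(f_number, wavelength_um) / pixel_size_um


if __name__ == "__main__":
    pixel_um = 3.45  # assumed example pixel pitch
    for n in (2.8, 5.6, 8.0, 16.0):
        ratio = airy_to_pixel_ratio(n, pixel_um)
        print(f"f/{n}: spot spans {ratio:.1f} pixels")
```

When the spot spans several pixels, stopping down further trades resolution for depth of field, so the aperture is usually set as a compromise between the two.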

3. Depth of Field and Measurement Range

For objects with varying heights (such as 3D workpieces or objects at different heights on a conveyor belt), the lens’s depth of field must be sufficient to cover the entire measurement range. If the depth of field is insufficient, out-of-focus blurring in certain areas will prevent the algorithm from reliably detecting features or may cause misclassification.
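A quick way to check whether the depth of field covers a given height range is the close-up approximation DOF ≈ 2 · N · c · (m + 1) / m², where N is the f-number, c the permissible circle of confusion, and m the magnification. The sketch below applies it; the choice of c (for example one or two pixel widths) is an assumption that depends on the application, and the names are hypothetical.

```python
def depth_of_field_mm(f_number: float, coc_mm: float, magnification: float) -> float:
    """Approximate total depth of field for close-range imaging.

    Uses DOF ~ 2 * N * c * (m + 1) / m^2, which holds when the object
    distance is much shorter than the hyperfocal distance.
    """
    return 2.0 * f_number * coc_mm * (magnification + 1.0) / magnification ** 2


def covers_height_range(f_number: float, coc_mm: float, magnification: float,
                        height_range_mm: float) -> bool:
    """True when the estimated depth of field spans the part's height variation."""
    return depth_of_field_mm(f_number, coc_mm, magnification) >= height_range_mm


if __name__ == "__main__":
    # Assumed example: f/8, circle of confusion of one 3.45 um pixel, magnification 0.088.
    dof = depth_of_field_mm(8.0, 0.00345, 0.088)
    print(f"Estimated depth of field: {dof:.1f} mm")
    print("Covers a 5 mm height range:", covers_height_range(8.0, 0.00345, 0.088, 5.0))
```

If the estimate falls short, the usual options are a smaller aperture (at the cost of light and diffraction), a lower magnification, or a telecentric lens designed for a larger depth of field.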

III. The Direct Impact of Lens Selection on Detection Accuracy

1. Edge Sharpness and Sub-pixel Accuracy

In high-precision positioning, distance measurement, or defect detection tasks, algorithms typically rely on sub-pixel fitting of edges. A lens with excellent aberration control produces steep edge transitions and concentrated gray-scale changes, which helps sub-pixel repeatability; under stable lighting and with a well-controlled setup, repeatability on the order of 0.01 pixel can be reached. Conversely, a poor-quality lens produces shallow, blurred edge gradients that are more sensitive to noise, leading to drift in fitting results.
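To make the idea of sub-pixel edge fitting concrete, here is a minimal sketch that locates an edge in a one-dimensional gray-level profile using the gradient-weighted centroid. This is one of several common estimators, chosen here for brevity; it is not presented as the method any particular vision library uses. The synthetic edge (a step blurred by a Gaussian) and all names are assumptions for illustration.

```python
import math


def blurred_edge(n: int, edge_pos: float, sigma: float) -> list:
    """Synthetic profile: an ideal dark-to-bright step blurred by a Gaussian (sigma in pixels)."""
    scale = sigma * math.sqrt(2.0)
    return [0.5 * (1.0 + math.erf((i - edge_pos) / scale)) for i in range(n)]


def subpixel_edge(profile: list) -> float:
    """Edge position as the centroid of the absolute central-difference gradient."""
    # Gradient at pixels 1 .. n-2; entry k of the list belongs to pixel k + 1.
    grads = [(profile[i + 1] - profile[i - 1]) / 2.0 for i in range(1, len(profile) - 1)]
    weights = [abs(g) for g in grads]
    total = sum(weights)
    if total == 0:
        raise ValueError("profile contains no gray-level transition")
    return sum((k + 1) * w for k, w in enumerate(weights)) / total


if __name__ == "__main__":
    true_position = 10.3  # assumed edge location in pixels
    profile = blurred_edge(21, true_position, sigma=1.0)
    print(f"True edge: {true_position}, estimated: {subpixel_edge(profile):.3f}")
```

On noise-free data the estimate lands very close to the true position. With real images, noise in the flat regions and the width of the transition both influence repeatability, which is where lens sharpness comes in.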

2. Color Reproduction and Chromatic Aberration Control

For vision solutions that rely on color information (such as color mark detection or PCB color ring recognition), the lens’s chromatic aberration and color reproduction capabilities are critical. Low-quality lenses may cause red-blue color fringing along high-contrast edges and fine details, leading to errors in color classification.

3. Thermal Drift and Long-Term Stability

In high-temperature, low-temperature, or prolonged operation environments, thermal expansion and contraction of lens materials can cause focal plane drift, leading to gradual image defocusing. Industrial-grade lenses typically employ thermally compensated designs or focus and iris locking mechanisms to maintain consistent detection accuracy, thereby preventing production interruptions or false alarms.

IV. Selecting the Right Lenses Improves System Efficiency and Reliability

1. Reduced Computational Load and Processing Time

A high-contrast, low-noise image with sharp edges often requires only simple thresholding or edge detection operators to reliably detect targets; in contrast, poor-quality images demand more complex filtering and compensation algorithms, which not only increase processing time but may also introduce false positives.

2. Reducing Maintenance and Parameter Adjustment Frequency

An appropriate optical configuration ensures system stability during batch changes or fluctuations in lighting, reducing the number of times on-site engineers need to repeatedly adjust focus, lighting, or algorithms.

3. Avoiding Detection Blind Spots and Misclassifications

If the lens’s field of view, depth of field, or resolution is insufficient, certain subtle defects (such as scratches, foreign objects, or missing parts) may not be captured by the sensor at all, leading to missed defects. Conversely, excessive distortion or blurred edges may cause good products to be misclassified as defective. Both of these are critical errors that automated inspection solutions must avoid at all costs.
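Whether a defect can be captured at all can be screened with a simple calculation of how much of the object each pixel covers. The sketch below does this; the requirement of at least three pixels across the smallest defect is a rule-of-thumb assumption, not a figure from this article, and it ignores lens blur, which would raise the requirement.

```python
def object_pixel_size_mm(fov_mm: float, pixels_along_fov: int) -> float:
    """Size of one pixel projected onto the object plane."""
    return fov_mm / pixels_along_fov


def can_resolve_defect(defect_mm: float, fov_mm: float, pixels_along_fov: int,
                       min_pixels_on_defect: int = 3) -> bool:
    """True when the defect spans at least the assumed minimum number of pixels."""
    smallest = object_pixel_size_mm(fov_mm, pixels_along_fov) * min_pixels_on_defect
    return defect_mm >= smallest


if __name__ == "__main__":
    # Assumed example: 100 mm field of view imaged onto 2448 pixels.
    px = object_pixel_size_mm(100.0, 2448)
    print(f"Object-space pixel size: {px * 1000:.1f} um")
    print("0.2 mm scratch resolvable:", can_resolve_defect(0.2, 100.0, 2448))
    print("0.05 mm scratch resolvable:", can_resolve_defect(0.05, 100.0, 2448))
```

If a required defect size fails this check, the field of view has to shrink, the sensor resolution has to rise, or the inspection has to be split across several cameras.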

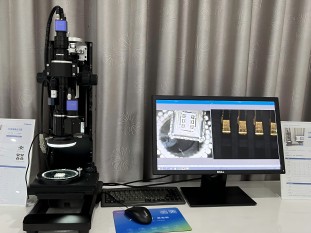
